Machine learning is not new and TensorFlow isn’t new either. By open-sourcing the tech, Google has brought the ML domain within the grasp of software engineers. Google is refining TensorFlow’s toolchain continuously but the barrier to entry for developers is still high. Not only the domain of machine learning is tough to wrap a beginner’s head around, TensorFlow itself presents a relatively high upfront complexity for engineers trying out the tech, not to mention building the tools will try hard one’s patience. TensorFlow Serving is meant to address deploying to production environments but the tooling is similarly complex to navigate.

This tutorial is am attempt to shorten the time it takes to deploy a (pre-trained) TensorFlow image recognition model in a web application built around Spring Boot. It circumvents the entire Python and Docker-centered installation requirements and instead uses a pre-trained image recognition model published by Google and the official Tensorflow for Java library to load the model and classify new images.

Run the following in your Terminal/bash (you need to have both git and JDK8 installed):

git clone https://github.com/florind/inception-serving-sb.git

./gradlew fetchInceptionFrozenModel bootrun

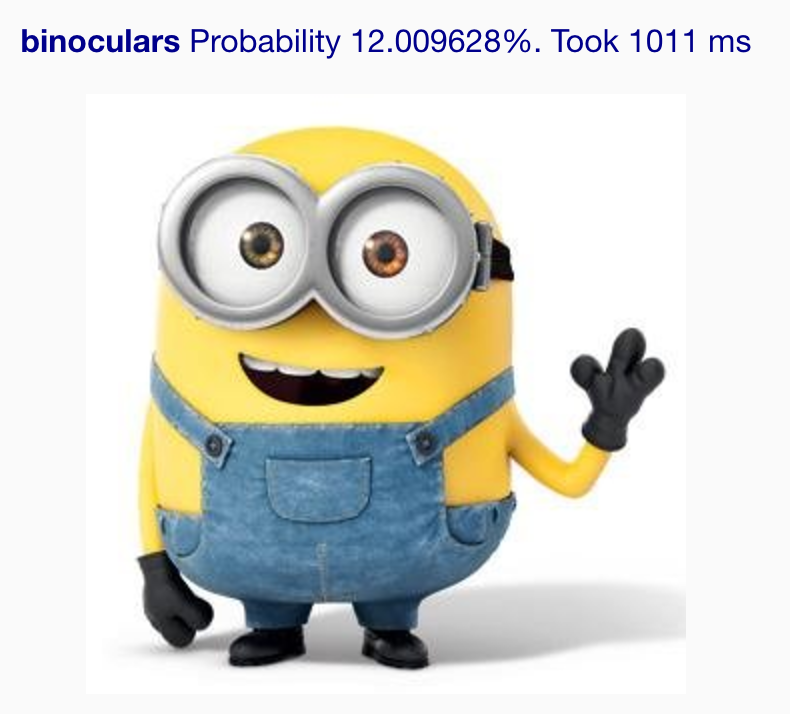

Now browse to http://localhost:8080 and upload an image. The model will classify the image and will report what it has identified in the image alongside a certainty percentage. You should see something like this:

The rest of this post is a dive into details on how such feat was possible and a peek into some of the tech behind TensorFlow.

What just happened?

The code loads a TensorFlow frozen model that has been previously built. This is the eventual output of the so-called fitting and inference (or training&evaluation), a process that takes a computational graph, training and evaluation data, runs the training data through this graph that uses slowly-changing loss functions (gradients) to identify the combination of variables that yield the best results on the training data then runs the resulting model on the evaluation data to check on the accuracy. This trial and error training&evaluation process repeats for a predefined number of steps until a final graph is produced. It is computationally intensive and this is where GPUs provide significant acceleration. This model can then be exported (serialized) and later used to classify (or infer) new data. In our case it will try to identify new images based on what it has learned from the training data.

Google has open-sourced the exporting process as well through a project called TensorFlow Serving. So to start using learned models you just need to have an exported model handy then feed new data on it for classification.

This is exactly what we just did. The pre-trained model I use in this project is called the Inception Model, a high quality image recognition model (again) open-sourced by Google and his graph is trained to recognize amongst 1000 objects including catamarans like the one above. The build script has a step that downloads and unpacks it: ./gradlew fetchInceptionFrozenModel

The project code then loads this graph during application startup and classifies new images based on this loaded graph. The output is the top inference (code here) although the graph computes many more but with decreasing level of probability.

Pre-trained frozen models

An important detail is that the TensorFlow exported model has to be frozen. That means that all variables are replaced by constants and thus “frozen” in the resulting graph which is generated in protobuf format. Along the process of freezing the inception graph, a text file containing the classification labels is also generated. This is important as it allows mapping label IDs that are computed at classification with actual label names (see the labels list lookup after classification here).

You also have to know how to query the graph as well. The tf.outputLayer attribute configured in my project here I’ve actually spotted here which took a while to figure out since I didn’t actually generate the frozen graph myself but took one prepared by the TensorFlow team with no documentation around it. code is good enough if you know what you’re looking for though but still time consuming. Querying the output tensor is also key. I’ve used this code to understand how the graph classifies an image and get the actual useful information (the category label indices).

You can get other models loaded using this code. I recommend either train & freeze graphs yourself (requires real ML skills) or use well documented frozen graphs to allow you to painlessly load and query them.

Retraining

The Inception model can identify one of the 1.000 images that it’s been trained with. Give this tool to kids and they’ll choose the character of the day. A few examples on funny fails:

|

|

|

|

This is why “retraining” exists. It takes an pre-trained model and allows adding new classifications. For the Inception model we can add more images to classify, like cartoon characters, celebrity faces etc. I’d really like to see a flourishing market growing around pre-trained&retrained models, it allows applications to leap directly into employing deep learning (computer vision, NLP etc.) without incurring the upfront cost of the ML itself.

Speed-up image detection

Here are a few tricks to increase the image recognition. It’s still a bit far from real-time computer vision but it can get pretty close with the right hardware.

Graph optimization : Which pre-trained graph is used, counts on performance. It takes more than one second to classify an image on my MacBook with Inception v3. It takes around 3 seconds when I tried Inception v4, frozen from this checkpoint file and the accuracy is not greatly improved I found. Google released MobileNet just last month, a model specifically designed for running on devices. You can also run graph inference optimization to reduce the the amount of computation when inferring. In my tests it boosted 5-10% the speed.

Compiling the TensorFlow JNI for your platform. The TensorFlow jarfile published in Maven is likely not optimized for your platform. Inference uses GPU if available and even if you don’t have the hardware, the native TensorFlow driver can make use of your machine’s hardware to accelerate computations. Warnings like this one The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.means you should compile the JNI driver for your platform. Howto available here. My tests show an inference speed-up of up to 20%!

Java vs. Python: My empirical tests have shown that running image recognition with the Python-driven TensorFlow Serving toolchain had similar performance with the Java counterpart (I ran the whole thing in Docker though).

Deploy in production

Build a Docker image using the supplied Dockerfile. It safely runs in Kubernetes as well. You can also package a self-contained fat jar using ./gradlew build. The resulting jarfile is in build/libs and you can execute it using the regular java -jar build/libs/inception-serving-sb .jar command.

Why Spring Boot?

Because Java devs can be hugely productive with it and effortlessly follow the best industry practices while at it. Because it takes a minute to build and deploy this webapp and have image detection out of the box. You’ll also notice that the integration test also outputs API documentation through the excellent Spring REST Docs project. Actually the integration test is loading the actual Inception graph and executes image recognition without mocking. The project is using the latest Spring Boot 2.0.0 which enables reactive programming although I’ve used none of that here yet.

Final thoughts

Deploying pre-trained models in production is not difficult although I’d like to see a streamlined way of publishing them beyond Google. To conceive and train any model is the bread and butter of machine learning, requiring expertise, computing power and time. A marketplace of production-ready pre-trained models will flourish only if they are easy to adopt in production.